Duplicate Content SEO: How to Identify, Manage, and Prevent Content Duplication

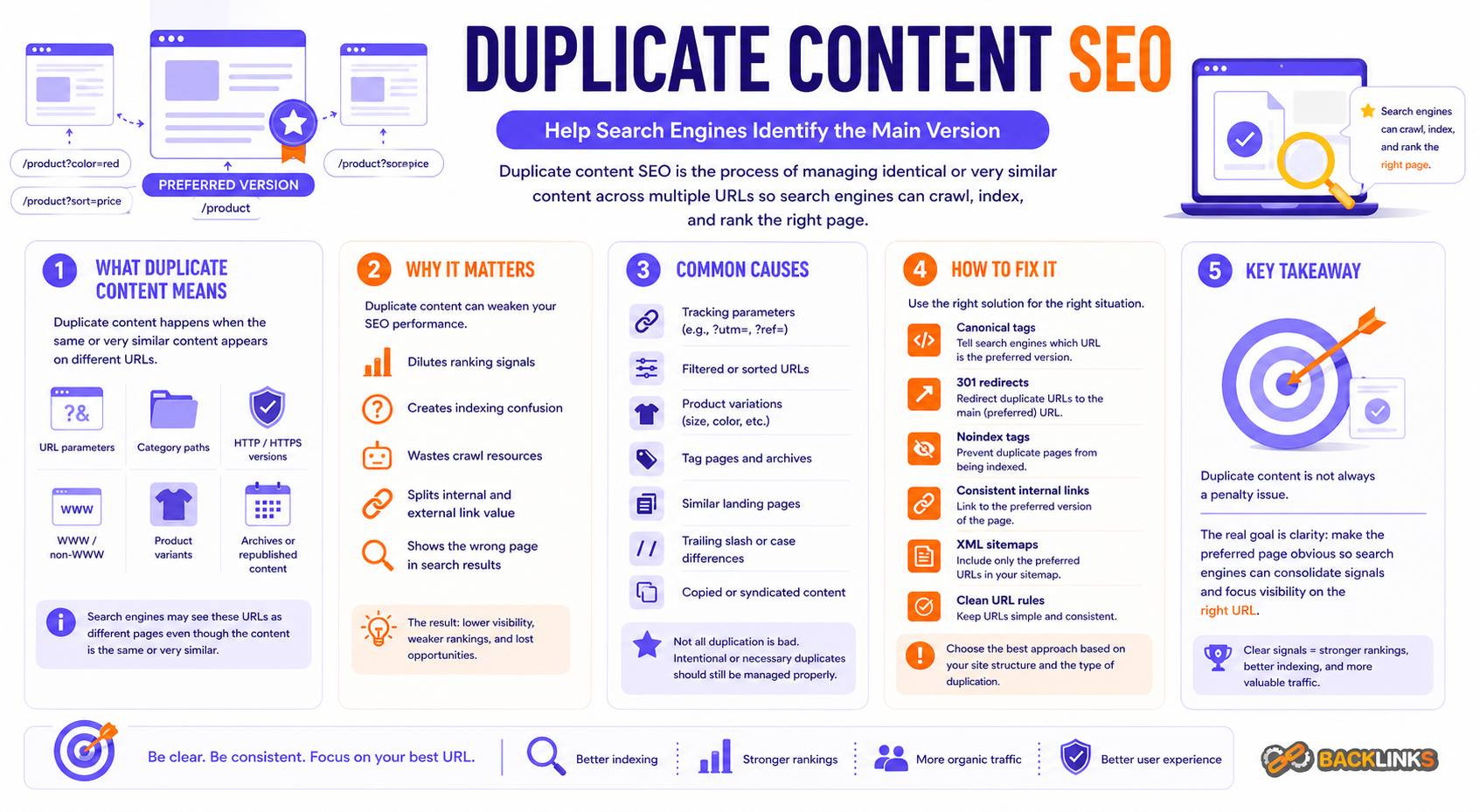

Duplicate content SEO refers to the process of identifying and managing identical or very similar content that appears across multiple URLs. It is a common technical SEO challenge that can affect how search engines crawl, index, and rank your website.

Duplicate content does not always result in a penalty, but it leaves search engines with an ambiguous choice. When multiple pages show the same or very similar content, search engines must decide which version to index and rank. This can lead to diluted ranking signals, inefficient crawling, and missed opportunities for organic visibility.

This guide explains what duplicate content is, why it matters, how it happens, and how to handle it effectively.

What Is Duplicate Content?

Duplicate content refers to blocks of content that are identical or substantially similar across different URLs. These URLs can exist on the same website or across different websites.

In practical terms, duplicate content means search engines are seeing multiple versions of the same information and must choose which one represents the “main” page.

For example, these URLs may show the same content:

- example.com/page/

- example.com/page/?ref=ads

- example.com/category/page/

- www.example.com/page/

- http://example.com/page/

Even though the content is the same, the URLs are different. Without clear signals, search engines may treat them as separate pages.

Duplicate content can also exist across domains, such as syndicated articles, copied product descriptions, or similar landing pages.

Why Duplicate Content Matters for SEO

Duplicate content matters because it can weaken SEO performance in several ways.

First, it can dilute ranking signals. If backlinks, internal links, and relevance signals are split across multiple URLs, no single page may perform as well as it could.

Second, it can create indexation issues. Search engines may index the wrong version of a page or ignore important pages altogether.

Third, it can waste crawl resources. Crawlers may spend time processing duplicate pages instead of discovering new or valuable content.

Finally, it can create a poor user experience. Users may encounter multiple versions of similar pages, inconsistent URLs, or outdated versions of content.

Duplicate content SEO is not about avoiding similarity entirely. It is about controlling which version of content should be treated as the main one.

How Duplicate Content Happens

Duplicate content is often created unintentionally through normal website functionality.

URL Variations

Many websites generate multiple URL versions for the same page. This can happen through:

- Tracking parameters

- Sorting and filtering options

- Session IDs

- Uppercase and lowercase variations

- Trailing slash inconsistencies

- HTTP and HTTPS versions

- WWW and non-WWW versions

Without proper control, these variations can create multiple crawlable URLs with the same content.
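The variations listed above can be collapsed into one preferred form with a small normalization routine. The following is a minimal sketch, not a definitive implementation. The function name normalize_url, the IGNORED_PARAMS set, and the chosen policy (HTTPS, non-WWW, lowercase paths, trailing slash) are assumptions made for illustration. Lowercasing paths and forcing a trailing slash are not safe on every site, so the policy should match how your own server resolves URLs.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical policy for illustration: parameters that never change the content.
IGNORED_PARAMS = {"ref", "utm_source", "utm_medium", "utm_campaign", "sessionid"}


def normalize_url(url: str) -> str:
    """Collapse common URL variations into one preferred form."""
    if "://" not in url:
        url = "https://" + url  # treat bare hosts as HTTPS
    parts = urlsplit(url)

    # Host: lowercase and drop a leading "www." (assumed non-WWW preference).
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]

    # Path: lowercase and always end with a slash (assumed site convention).
    path = parts.path.lower() or "/"
    if not path.endswith("/"):
        path += "/"

    # Query: drop tracking and session parameters, keep everything else
    # (sorting and filtering parameters may genuinely change the content).
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name.lower() not in IGNORED_PARAMS
    ]
    return urlunsplit(("https", host, path, urlencode(kept), ""))
```

Under these assumptions, example.com/page/?ref=ads, www.example.com/page/, and http://example.com/page/ all normalize to https://example.com/page/. A URL such as example.com/category/page/ does not, because a different path cannot be resolved by string normalization alone and needs a canonical tag or redirect instead.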

Ecommerce and Product Pages

Ecommerce websites frequently create duplicate content through:

- Product variants (size, color, model)

- Multiple category paths for the same product

- Filtered category pages

- Sorting options

- Pagination

For example, a product may appear under several categories, each with a different URL.

Content Management Systems (CMS)

Content management systems can create duplicates through:

- Tag pages and category pages

- Author archives

- Date-based archives

- Pagination

- Print-friendly pages

Some of these pages may have value, but others may create unnecessary duplication.

Content Syndication and Republishing

Content may appear on multiple websites when it is syndicated or republished. This is common in news, blogging, and partnerships.

If multiple versions exist, search engines must decide which one is original or most relevant.

Internal Duplication

Internal duplication happens when similar pages target the same topic with only minor differences. This can occur when websites create multiple landing pages with overlapping intent.

This is sometimes mistaken for deliberate keyword targeting, but it tends to produce several pages competing for the same queries instead of one strong page, a problem often called keyword cannibalization.
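One way to put a number on "only minor differences" is to compare the overlapping word sequences of two pages. The sketch below uses Jaccard similarity over word shingles, which is a common general technique and not necessarily how any search engine measures similarity. The function names and the shingle size of three words are assumptions chosen for illustration.

```python
def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word sequences ("shingles") in the text."""
    words = text.lower().split()
    if len(words) < size:
        # Very short texts become a single shingle (or none if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard_similarity(a: str, b: str, size: int = 3) -> float:
    """Share of shingles the two texts have in common, from 0.0 to 1.0."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0  # two empty texts are treated as identical
    return len(sa & sb) / len(sa | sb)
```

Scores close to 1.0 suggest two pages are near copies that may be better merged. Any threshold for "too similar" is a judgment call that depends on the site and its templates.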

Duplicate Content vs Thin Content

Duplicate content and thin content are different issues, but they can overlap.

Duplicate content means multiple pages share similar content. Thin content means a page does not provide enough value or depth.

A page can be both thin and duplicate, such as multiple low-value category pages with minimal content. However, a well-written page can still be considered duplicate if it closely matches another page.

Understanding the difference helps determine the correct solution. Duplicate content needs consolidation or canonicalization. Thin content may need improvement or removal.

How Search Engines Handle Duplicate Content

Search engines do not want to show duplicate results. When they detect similar content across multiple URLs, they try to choose one version to index and rank.

This decision is based on several signals, including:

- Canonical tags

- Internal linking

- External links

- Sitemap inclusion

- URL structure

- Content signals

- Page authority

If signals are unclear or conflicting, search engines may choose a version that is not ideal from an SEO perspective.

This is why duplicate content SEO focuses on clarity. The goal is to make the preferred version obvious.

Methods to Fix Duplicate Content

There are several ways to manage duplicate content, depending on the situation.

Canonical Tags

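Canonical tags indicate the preferred version of a page when multiple similar URLs exist. Search engines treat them as a strong hint rather than a strict directive, so they work best when other signals agree. They are useful when duplicate URLs need to remain accessible.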

For example, filtered pages or tracking URLs can canonicalize to the main version.
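When checking canonical tags at scale, it helps to read the declared canonical URL straight from the page markup. The following is a minimal sketch using only the Python standard library. The class and function names are hypothetical, and it assumes the canonical is declared as a link element with rel="canonical" in the HTML rather than in an HTTP header.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        # rel may hold several space-separated values, in any letter case.
        rels = (attr.get("rel") or "").lower().split()
        if "canonical" in rels and attr.get("href"):
            self.canonicals.append(attr["href"])


def find_canonicals(html: str) -> list[str]:
    """Return all canonical URLs declared in the given HTML."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals
```

A page should normally return exactly one URL. An empty list means no canonical is declared, and more than one entry is a conflicting signal worth fixing.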

Redirects

Redirects are used when duplicate pages should no longer exist. A permanent (301) redirect sends users and search engines to the correct version and signals that the move is lasting.

This is useful for outdated URLs, merged pages, or site migrations.
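After several merges or migrations, redirect rules tend to pile up into chains, where one old URL points to another old URL. The sketch below flattens a redirect map so that every source points directly at its final destination, and it raises an error if the rules loop. The function name and the dictionary format (old path mapped to new path) are assumptions for illustration, not the format of any particular server or CMS.

```python
def resolve_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every old URL directly at its final destination."""
    resolved = {}
    for start in redirects:
        seen = {start}
        target = redirects[start]
        # Follow the chain until the target is no longer redirected itself.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        resolved[start] = target
    return resolved
```

For example, the rules {"/old/": "/newer/", "/newer/": "/newest/"} resolve to {"/old/": "/newest/", "/newer/": "/newest/"}, so each request needs a single hop.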

Noindex Tags

A noindex tag tells search engines not to include a page in search results. It is useful for pages that should remain accessible but do not need to appear in search.

Examples include internal search results or low-value tag pages.
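To verify which pages actually carry a noindex directive, both the robots meta tag and the X-Robots-Tag response header need to be checked. The following is a minimal sketch. The names RobotsMetaFinder and is_noindex are hypothetical, the header value is passed in as a plain string, and crawler-specific meta tags (such as ones addressed to a single bot) are deliberately ignored.

```python
from html.parser import HTMLParser

# "none" is shorthand for "noindex, nofollow".
BLOCKING_DIRECTIVES = {"noindex", "none"}


def _split_directives(value: str) -> set[str]:
    """Split a comma-separated directive string into lowercase tokens."""
    return {part.strip().lower() for part in value.split(",") if part.strip()}


class RobotsMetaFinder(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> elements."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.directives |= _split_directives(attr.get("content") or "")


def is_noindex(html: str, x_robots_tag: str = "") -> bool:
    """True if the page markup or the header value asks not to be indexed."""
    finder = RobotsMetaFinder()
    finder.feed(html)
    directives = finder.directives | _split_directives(x_robots_tag)
    return bool(directives & BLOCKING_DIRECTIVES)
```

Running this over internal search results or tag pages confirms that the pages you intended to exclude are marked, and that important pages are not marked by accident.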

URL Parameter Control

Managing URL parameters helps prevent unnecessary duplicate pages. This can involve limiting indexation of parameter-based URLs or controlling how they are generated.
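A useful first step is simply to see which parameters exist. The sketch below counts how many URLs in a crawl export use each parameter name, which helps decide which ones deserve attention. The function name parameter_inventory and the input format (a plain list of URL strings) are assumptions for illustration.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def parameter_inventory(urls: list[str]) -> Counter:
    """Count how many URLs use each query parameter name."""
    counts = Counter()
    for url in urls:
        query = urlsplit(url).query
        # A set ensures each parameter is counted once per URL.
        names = {name.lower() for name, _ in parse_qsl(query, keep_blank_values=True)}
        counts.update(names)
    return counts
```

Parameters that appear on many URLs without changing the content, such as tracking or session identifiers, are the usual candidates for canonicalization or for removal from internal links.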

Internal Linking Consistency

Internal links should point to the preferred version of a page. Inconsistent linking can send mixed signals and reinforce duplicate URLs.

XML Sitemap Optimization

An XML sitemap should include only preferred, canonical URLs. Including duplicate URLs in the sitemap can create confusion.
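This rule can be enforced when the sitemap is generated. The following sketch writes a sitemap that contains only URLs whose declared canonical is the URL itself. The function name build_sitemap and the input format (a dictionary mapping each crawled URL to its canonical URL) are assumptions for illustration, and optional elements such as lastmod are left out.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, str]) -> str:
    """Build sitemap XML from a mapping of crawled URL -> canonical URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    # Keep only self-canonical URLs, sorted for stable output.
    preferred = sorted(url for url, canonical in pages.items() if url == canonical)
    for url in preferred:
        loc = ET.SubElement(ET.SubElement(urlset, "url"), "loc")
        loc.text = url
    return ET.tostring(urlset, encoding="unicode")
```

With this approach a URL like https://example.com/page/?ref=ads, which canonicalizes to https://example.com/page/, never reaches the sitemap, so the sitemap and the canonical tags send the same signal.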

Common Duplicate Content SEO Mistakes

One common mistake is ignoring duplicate content because there is no visible penalty. While there may not be a direct penalty, the indirect impact can still reduce performance.

Another mistake is relying on one solution for every case. For example, canonical tags are sometimes applied everywhere without considering whether a redirect or noindex would be more appropriate.

Other common mistakes include:

- Allowing parameter URLs to be indexed

- Including duplicate URLs in sitemaps

- Canonicalizing pages incorrectly

- Creating multiple pages targeting the same keyword

- Not handling HTTP, HTTPS, WWW, and non-WWW versions properly

- Leaving old URLs active after migrations

- Allowing trailing slash inconsistencies

- Ignoring internal linking inconsistencies

- Republishing content without canonical control

These issues often build up over time as a website grows.

How to Audit Duplicate Content

A duplicate content audit should identify where duplication exists and how it affects SEO performance.

Start by reviewing key page types, including product pages, category pages, blog posts, landing pages, and filtered URLs.

Then check for URL variations and parameter-based duplicates. Look for patterns across templates, not just individual pages.

A practical audit should answer:

- Are multiple URLs showing the same content?

- Are canonical tags implemented correctly?

- Are duplicate URLs indexable?

- Are internal links consistent?

- Are sitemaps including only preferred URLs?

- Are parameter URLs creating duplication?

- Are multiple pages targeting the same topic?

- Are redirects implemented where needed?

The goal is to reduce duplication and strengthen the preferred version of each page.
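The first audit question, whether multiple URLs show the same content, can be answered by fingerprinting page text and grouping URLs that share a fingerprint. The sketch below is a simplified illustration. The function names are hypothetical, it assumes the main text of each page has already been extracted, and it only catches exact matches after whitespace and case are normalized, not near-duplicates.

```python
import hashlib
import re
from collections import defaultdict


def content_fingerprint(text: str) -> str:
    """Hash the text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicate_groups(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose extracted text has the same fingerprint."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[content_fingerprint(text)].append(url)
    # Report only fingerprints shared by two or more URLs.
    return sorted(sorted(urls) for urls in groups.values() if len(urls) > 1)
```

Each returned group is a set of URLs competing to be the main version. Reviewing groups by template, rather than one URL at a time, usually reveals the pattern that created them.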

Duplicate Content SEO for Different Website Types

Different websites face different duplication challenges.

For ecommerce websites, managing product variants and filtered URLs is critical. Without control, duplication can scale quickly.

For blogs and publishers, tag pages, category pages, archives, and syndicated content need careful management.

For service-based websites, duplication often comes from creating multiple similar landing pages targeting slightly different keywords or locations.

For SaaS websites, duplication may come from documentation pages, feature pages, or template-driven content.

Each website type requires a strategy based on how content is structured and how users search.

How Long Duplicate Content Fixes Take

The impact of duplicate content fixes depends on how quickly search engines recrawl and reprocess the affected URLs.

Small changes, such as fixing canonical tags or updating internal links, may be reflected relatively quickly on frequently crawled pages.

Larger changes, such as consolidating many duplicate pages or restructuring URL systems, can take longer. Search engines need time to process redirects, update indexation, and reassign signals.

It is important to monitor progress rather than expect immediate results. Improvements in indexation, rankings, and crawl efficiency may appear gradually.

Practical Guidance for Duplicate Content SEO

Duplicate content SEO should be approached strategically, not reactively.

Start by identifying which pages are important for search visibility. Then make sure those pages are the clear, preferred versions.

Use canonical tags, redirects, and noindex directives where appropriate. Avoid using them blindly. Each situation should be evaluated based on user experience and SEO value.

Also focus on prevention. As a website grows, new templates, filters, categories, and parameters can introduce duplication. Clear URL rules, internal linking standards, and content planning can reduce future issues.

For long-term success, duplicate content should be managed as part of overall technical SEO, not as a one-time fix.

Conclusion

Duplicate content SEO is about controlling how similar content is presented and interpreted across different URLs. While duplicate content is common, unmanaged duplication can dilute signals, waste crawl resources, and reduce organic visibility.

The goal is not to eliminate every similar page, but to clearly define which version should be treated as the main one. This is done through canonical tags, redirects, consistent internal linking, and a clean URL structure.

A strong duplicate content strategy improves clarity for search engines, strengthens important pages, and supports long-term SEO performance.