Technical SEO: A Practical Guide to Building a Search-Friendly Website

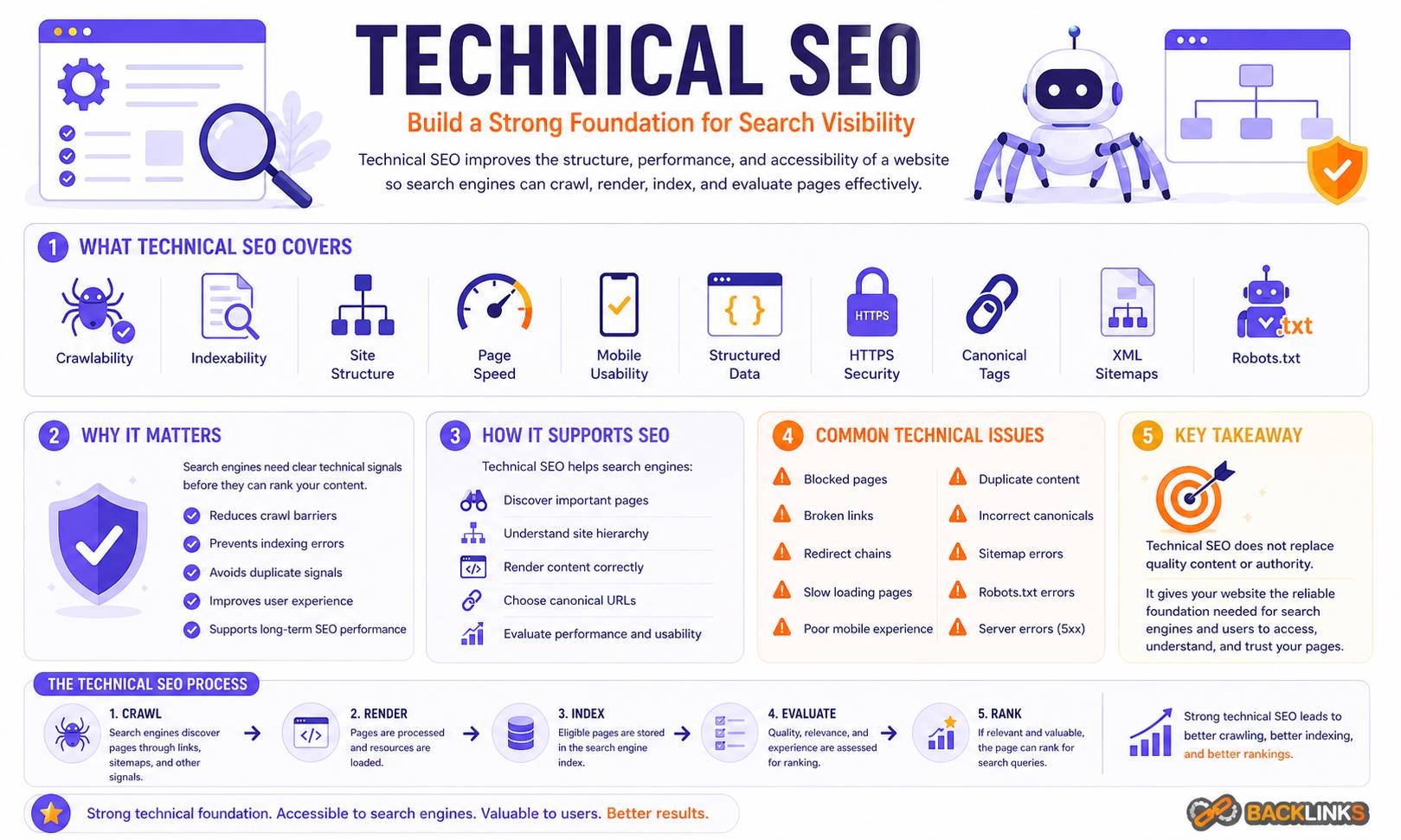

Technical SEO is the foundation that allows search engines to crawl, understand, index, and evaluate your website efficiently. Strong content and backlinks matter, but they cannot perform at their full potential if your website has technical problems that block discovery, slow down users, confuse search engines, or dilute authority across duplicate pages.

For many businesses, technical SEO feels complex because it sits between marketing, development, UX, analytics, and website management. It involves site architecture, crawling, indexing, page speed, structured data, mobile usability, internal linking, canonical tags, redirects, XML sitemaps, robots.txt files, and more. Each element matters, but the real value comes from understanding how they work together.

This guide explains what Technical SEO is, why it matters, how it works, and how to approach it strategically. It is written for business owners, marketers, SEO professionals, and website teams that want a practical understanding of how to make a website easier for search engines and users to access.

What Is Technical SEO?

Technical SEO is the process of optimizing the technical foundation of a website so search engines can crawl, render, index, and rank its pages effectively. It focuses on the infrastructure behind organic search visibility rather than the written content alone.

In practical terms, Technical SEO helps answer questions such as:

- Can search engines access the important pages?

- Are the right pages being indexed?

- Is the site structure easy to understand?

- Do pages load quickly and work well on mobile?

- Are duplicate or low-value URLs wasting crawl resources?

- Are redirects, canonical tags, and internal links handled correctly?

- Does structured data help search engines interpret the content?

Technical SEO does not replace content strategy, keyword research, or authority building. Instead, it supports them. A well-optimized website gives strong content a better chance to be discovered, understood, and rewarded.

Why Technical SEO Matters

Search engines need to process websites at scale. They do not simply “see” a website the way a person does. They crawl URLs, render pages, analyze links, evaluate signals, index selected pages, and decide which results are most useful for a search query.

If your technical setup creates friction at any stage, your organic performance can suffer.

A page may be well written but fail to rank because it is blocked from crawling. A product category may be valuable but ignored because canonical tags point elsewhere. A blog may have useful articles but weak internal linking, making deeper pages hard to discover. A website may attract traffic but lose conversions because pages are slow or unstable on mobile.

Technical SEO matters because it affects the full search journey:

- Crawling determines whether search engines can discover your URLs.

- Indexing determines whether those URLs can appear in search results.

- Site architecture influences how authority flows through the website.

- Performance affects user experience and engagement.

- Structured data can improve how search engines interpret your pages.

- Clean URL management reduces duplication and wasted crawl activity.

For larger websites, technical SEO becomes even more important. Ecommerce sites, publishers, marketplaces, SaaS websites, and multilingual websites often create many URL variations. Without strong technical controls, these sites can quickly develop crawl waste, duplicate pages, thin pages, redirect chains, and indexing problems.

How Technical SEO Works

Technical SEO works by improving the signals and systems search engines rely on when discovering and evaluating your website. It is not one single task. It is a set of connected practices that reduce friction and improve clarity.

The main process can be understood in four stages: crawlability, indexability, interpretation, and experience.

Crawlability: Helping Search Engines Discover Your Pages

Crawlability refers to how easily search engine bots can find and access the URLs on your website. If a page cannot be crawled, it cannot be evaluated properly for organic search.

Search engines discover pages through links, XML sitemaps, redirects, and previously known URLs. A clean internal linking structure helps them move through the site naturally. Important pages should not be reachable only through JavaScript interactions, search forms, or isolated navigation paths.

Several technical elements influence crawlability.

Internal Linking

Internal links help search engines understand which pages are important and how content is connected. A page that receives no internal links is much harder to discover and may appear less important.

For example, a website with a strong guide on SEO site architecture should link naturally to related pages about crawling, indexing, URL structure, and internal links. These connections help both users and search engines move through the topic logically.

Good internal linking uses descriptive anchor text, avoids excessive repetition, and supports the hierarchy of the site. It should not be treated as an afterthought added only after content is published.
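One way to make discoverability concrete is to measure how many clicks each page sits from the homepage and to list pages that no crawl path reaches. The sketch below is a minimal illustration under stated assumptions: the link graph `site_links` and its URLs are hypothetical, and in practice the graph would come from a crawler export.

```python
from collections import deque


def crawl_depths(links: dict[str, list[str]], start: str) -> dict[str, int]:
    """Return the minimum number of clicks from `start` to each reachable URL."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links: dict[str, list[str]], start: str) -> set[str]:
    """Return known pages that cannot be reached by following links from `start`."""
    known = set(links) | {t for targets in links.values() for t in targets}
    return known - set(crawl_depths(links, start))


# Hypothetical link graph: each key is a page, each value lists the pages it links to.
site_links = {
    "/": ["/technical-seo/", "/blog/"],
    "/technical-seo/": ["/technical-seo/crawling/", "/technical-seo/indexing/"],
    "/blog/": ["/technical-seo/"],
    "/technical-seo/crawling/": [],
    "/technical-seo/indexing/": [],
    "/old-landing-page/": ["/"],  # links out, but nothing links to it
}

depths = crawl_depths(site_links, "/")
orphans = orphan_pages(site_links, "/")
```

Pages with a high depth or pages that show up as orphans are natural candidates for new contextual links.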

XML Sitemaps

An XML sitemap lists important URLs that you want search engines to discover. It is especially useful for large websites, recently updated pages, and pages that may not receive many internal links yet.

A sitemap does not guarantee indexing. It is a discovery tool, not a ranking shortcut. The sitemap should include only canonical, indexable, valuable URLs. Including redirected, blocked, duplicate, or low-quality URLs sends mixed signals and makes the sitemap a less reliable guide to the pages you want indexed.
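The filtering rule above can be sketched in a few lines. This is an illustrative example, not a complete sitemap generator: the page records, their field names (`status`, `indexable`, `canonical`, `lastmod`), and the example URLs are all assumptions, and a real implementation would read them from a crawl or the CMS.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[dict]) -> str:
    """Build sitemap XML, keeping only canonical, indexable pages that return 200."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if page["status"] != 200 or not page["indexable"]:
            continue  # skip redirects, errors, and noindexed pages
        if page["canonical"] != page["url"]:
            continue  # skip URLs that canonicalize elsewhere
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page["url"]
        if page.get("lastmod"):
            ET.SubElement(url_el, "lastmod").text = page["lastmod"]
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical page records for illustration.
pages = [
    {"url": "https://example.com/technical-seo/", "status": 200, "indexable": True,
     "canonical": "https://example.com/technical-seo/", "lastmod": "2024-05-01"},
    {"url": "https://example.com/technical-seo/?utm_source=abc", "status": 200, "indexable": True,
     "canonical": "https://example.com/technical-seo/"},
    {"url": "https://example.com/old-guide/", "status": 301, "indexable": False,
     "canonical": "https://example.com/old-guide/"},
    {"url": "https://example.com/thank-you/", "status": 200, "indexable": False,
     "canonical": "https://example.com/thank-you/"},
]

sitemap_xml = build_sitemap(pages)
```

Only the first record survives the filter; the parameter URL, the redirect, and the noindexed page are left out.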

Robots.txt

The robots.txt file tells crawlers which areas of a website they are allowed or not allowed to crawl. It can be useful for preventing crawl waste on unimportant sections, but it must be handled carefully.

Blocking a page in robots.txt does not necessarily remove it from the index, because search engines can still discover the URL through other signals such as external links. A robots.txt block also prevents search engines from seeing page-level directives such as noindex tags, because the page itself is never fetched. For sensitive pages, access control is more appropriate than relying on robots.txt.
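A quick safeguard is to test important URLs against the robots.txt rules before they go live. The sketch below uses Python's standard `urllib.robotparser`; the robots.txt content and URLs are hypothetical. Note that this parser does simple prefix matching and may not interpret wildcard patterns the way a particular search engine does, so treat it as a first check rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /cart/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


important_urls = [
    "https://example.com/technical-seo/",
    "https://example.com/search/?q=canonical+tags",
    "https://example.com/cart/checkout/",
]

blocked = blocked_urls(ROBOTS_TXT, important_urls)
```

If a revenue-driving page ever appears in `blocked`, that is worth catching before deployment rather than after a traffic drop.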

Indexability: Making Sure the Right Pages Can Rank

Indexability refers to whether a page is eligible to be stored in a search engine’s index and shown in search results. A page can be crawlable but not indexable.

Common indexability controls include meta robots tags, canonical tags, HTTP status codes, redirects, and content quality signals.

Meta Robots Tags

A meta robots tag can tell search engines whether a page should be indexed. The most common directive is noindex, which instructs search engines not to include the page in search results.

This is useful for pages such as internal search results, low-value filter combinations, staging content, duplicate utility pages, and thank-you pages. However, applying noindex to important pages by mistake can remove valuable content from search results.

Indexing rules should be audited regularly, especially after site redesigns, CMS changes, migrations, or template updates.
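Part of that audit can be automated. The following sketch checks an HTML document for a robots meta tag containing `noindex` (or `none`, which implies it). It is a simplified example: it only inspects `<meta name="robots">` and does not cover crawler-specific meta tags or the `X-Robots-Tag` HTTP header, which would need separate checks.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() != "robots":
            return
        for directive in attributes.get("content", "").split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def is_noindexed(html: str) -> bool:
    """Return True if the page's robots meta tag asks not to be indexed."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives or "none" in parser.directives
```

Running `is_noindexed` over the templates of key page types after each release is a cheap way to catch an accidental noindex.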

Canonical Tags

Canonical tags help search engines understand the preferred version of a page when similar or duplicate URLs exist. This is common on ecommerce sites, blogs with tracking parameters, paginated content, filtered pages, and syndicated content.

A canonical tag is a hint, not an absolute command. If the technical setup sends conflicting signals, search engines may choose a different canonical URL.

Good canonical implementation requires consistency. Internal links, sitemap URLs, redirects, hreflang tags, and canonical tags should all point toward the preferred URL version.
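A consistency check along these lines can be sketched as follows. The inputs are assumptions for illustration: `pages` holds each URL with the canonical it declares, `sitemap_urls` is the set of URLs listed in the sitemap, and `redirected_urls` is the set of URLs known to return a redirect. A page with no canonical tag is treated as self-canonical here, which is a simplification.

```python
def canonical_conflicts(pages, sitemap_urls, redirected_urls):
    """Return (url, problem) pairs where canonical signals disagree."""
    problems = []
    for page in pages:
        url = page["url"]
        canonical = page.get("canonical") or url  # assumption: missing tag means self-canonical
        if canonical in redirected_urls:
            problems.append((url, "canonical points to a redirected URL"))
        if url in sitemap_urls and canonical != url:
            problems.append((url, "sitemap lists a non-canonical URL"))
    return problems


# Hypothetical audit data for illustration.
pages = [
    {"url": "https://example.com/shoes/", "canonical": "https://example.com/shoes/"},
    {"url": "https://example.com/shoes/?sort=price", "canonical": "https://example.com/shoes/"},
    {"url": "https://example.com/boots/", "canonical": "https://example.com/boots-old/"},
]
sitemap_urls = {"https://example.com/shoes/", "https://example.com/boots/"}
redirected_urls = {"https://example.com/boots-old/"}

conflicts = canonical_conflicts(pages, sitemap_urls, redirected_urls)
```

In this sample, only `/boots/` is flagged, once for pointing at a redirected canonical and once for appearing in the sitemap while canonicalizing elsewhere.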

HTTP Status Codes

Status codes communicate what happened when a browser or crawler requests a URL. A working page typically returns a 200 status code. A permanently redirected page should return a 301 (or 308). A missing page should return a 404, or a 410 if it has been removed deliberately.

Incorrect status codes can create serious SEO problems. A deleted page that still returns 200 may be treated as a soft 404. A temporary redirect used for a permanent move can weaken consolidation signals. A redirect chain can slow crawling and create unnecessary complexity.

Clean status code management is essential during website migrations, URL restructuring, and content pruning.
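To show how these problems can be detected, the sketch below follows a URL through a table of simulated responses and flags chains, temporary redirects, and dead ends. The `responses` mapping and its URLs are hypothetical stand-ins for real crawl data; no network requests are made.

```python
REDIRECT_CODES = (301, 302, 307, 308)


def trace_redirects(url, responses, max_hops=10):
    """Follow redirects in `responses` and return the list of (url, status) hops."""
    hops = []
    seen = set()
    while url not in seen and len(hops) < max_hops:
        seen.add(url)
        status, location = responses.get(url, (404, None))  # unknown URLs count as 404
        hops.append((url, status))
        if status not in REDIRECT_CODES or location is None:
            break
        url = location
    return hops


def summarize(hops):
    """Return human-readable notes about a traced redirect path."""
    statuses = [status for _, status in hops]
    notes = []
    if statuses[-1] in REDIRECT_CODES:
        notes.append("redirect loop or hop limit reached")
    if len(hops) > 2:
        notes.append("redirect chain")
    if any(status in (302, 307) for status in statuses[:-1]):
        notes.append("temporary redirect in path")
    if statuses[-1] in (404, 410):
        notes.append("ends on a missing page")
    return notes


# Hypothetical crawl results: URL -> (status code, redirect target).
responses = {
    "/old-guide/": (301, "/guides/technical-seo/"),
    "/guides/technical-seo/": (302, "/technical-seo/"),
    "/technical-seo/": (200, None),
}

chain = trace_redirects("/old-guide/", responses)
notes = summarize(chain)
```

Here the old URL takes two hops to reach the live page, and one of them is temporary, so both issues are reported.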

Site Architecture and URL Structure

Site architecture is the way pages are organized and connected across a website. Strong architecture helps users navigate naturally and helps search engines understand relationships between pages.

A good structure usually moves from broad topics to more specific pages. For example, a main page about Technical SEO may connect to supporting resources on:

- Crawlability and indexing

- XML sitemaps

- Robots.txt best practices

- Canonical tags

- Website speed optimization

- Structured data SEO

- Internal linking for SEO

- SEO audits

This structure gives users a clear path to deeper information and helps search engines interpret topical relationships.

URL Structure

URLs should be readable, stable, and aligned with the page’s purpose. A good URL does not need to include every keyword variation. It should be concise and easy to understand.

For example, /technical-seo/ is clearer than /blog/post?id=1847&utm_source=abc. Clean URLs are easier to share, easier to manage, and less likely to create unnecessary duplicate variations.

Changing URLs should not be done casually. If a URL already has rankings, links, or traffic, changing it requires a proper redirect strategy. Otherwise, the site may lose accumulated authority and create broken user journeys.

Website Speed and Page Experience

Website performance is a technical SEO concern because slow and unstable pages can harm user experience. Search engines want to send users to pages that are accessible, usable, and reliable.

Page speed is not only about passing a test score. It affects how people interact with the site. A slow ecommerce category can reduce product discovery. A slow lead generation page can reduce form submissions. A slow article can increase abandonment before the reader engages with the content.

Important performance areas include:

- Server response time

- Image compression and sizing

- JavaScript execution

- CSS delivery

- Caching

- Font loading

- Mobile performance

- Layout stability

The best performance work usually comes from collaboration between SEO, development, UX, and analytics teams. The goal is not to remove every design element, but to build pages that load efficiently while still serving the business purpose.
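When reviewing performance data, it helps to rate measurements against fixed thresholds instead of a single score. The sketch below assumes Google's published Core Web Vitals thresholds for Largest Contentful Paint, Interaction to Next Paint, and Cumulative Layout Shift as they stood at the time of writing; the metric names and sample values are illustrative, and the thresholds should be confirmed against current documentation.

```python
# (good, poor) boundaries per metric; assumed from Google's published thresholds.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),   # Largest Contentful Paint
    "inp_ms": (200, 500),        # Interaction to Next Paint
    "cls": (0.1, 0.25),          # Cumulative Layout Shift (layout stability)
}


def rate_metric(name: str, value: float) -> str:
    """Classify a field measurement as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for one page template.
sample = {"lcp_seconds": 3.1, "inp_ms": 180, "cls": 0.3}
ratings = {name: rate_metric(name, value) for name, value in sample.items()}
```

Grouping ratings by page template usually makes it easier to decide where development time will have the most effect.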

Mobile-Friendly Technical SEO

Search engines, Google in particular, primarily evaluate websites through their mobile version, an approach known as mobile-first indexing. This means a page that works well on desktop but poorly on mobile may struggle in search.

Mobile SEO is not just responsive design. The mobile version should provide the same important content, links, structured data, metadata, and functionality as the desktop version. Hidden navigation, collapsed sections, slow scripts, intrusive popups, and broken layouts can all affect usability.

A mobile-friendly technical setup should make content easy to read, links easy to tap, forms easy to complete, and navigation easy to follow. For local businesses, ecommerce sites, and service websites, mobile usability can directly affect conversions as well as rankings.

Structured Data and Search Understanding

Structured data is a standardized format that helps search engines understand specific information on a page. It can describe products, reviews, FAQs, articles, organizations, breadcrumbs, events, recipes, and other entities.

Structured data does not guarantee enhanced search results. It also does not compensate for weak content. Its value is in clarity. When implemented correctly, it can help search engines interpret what the page is about and how different elements relate to each other.

Common structured data opportunities include:

- Article schema for editorial content

- Product schema for ecommerce pages

- Breadcrumb schema for site hierarchy

- Organization schema for business identity

- LocalBusiness schema for location-based businesses

- FAQ schema where the content genuinely includes relevant questions and answers

Structured data should match visible page content. Adding markup for content that users cannot see can create quality issues. It should also be tested after template changes, CMS updates, and redesigns.
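As an illustration, structured data is often embedded as JSON-LD in a script tag. The sketch below generates Article markup from values that should already be visible on the page. The function name, the chosen properties, and the sample values are assumptions; the properties a given search feature requires should be checked against the relevant documentation.

```python
import json


def article_json_ld(headline: str, author_name: str, date_published: str, url: str) -> str:
    """Return a JSON-LD script tag describing an article (schema.org vocabulary)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the surrounding script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values; they should mirror what the visible page shows.
snippet = article_json_ld(
    headline="Technical SEO: A Practical Guide",
    author_name="Jane Example",
    date_published="2024-05-01",
    url="https://example.com/technical-seo/",
)
```

Generating markup from the same fields that render the page keeps the structured data and the visible content in sync.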

Duplicate Content and Canonicalization

Duplicate content occurs when the same or very similar content appears on multiple URLs. This rarely leads to a penalty on its own, but it can create confusion and inefficiency.

Duplicate URLs may be created by:

- Tracking parameters

- Filtered navigation

- Sort options

- Printer-friendly pages

- HTTP and HTTPS versions

- Trailing slash inconsistencies

- Uppercase and lowercase URL variations

- Product variants

- Syndicated content

The main problem is signal dilution. If multiple URLs compete for the same purpose, search engines may not know which version to prioritize. Backlinks, internal links, and engagement signals may also become split across variations.

Technical SEO solves this through consistent canonical tags, redirect rules, parameter handling, sitemap hygiene, and internal linking discipline.
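Several of the variations listed above can be collapsed with a normalization rule. The sketch below is one possible policy, not a universal one: it assumes the site prefers HTTPS, lowercase paths, a trailing slash on non-file URLs, and no tracking parameters. The parameter list is illustrative, and lowercasing paths is only safe if the site never relies on case-sensitive URLs.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Illustrative list of parameters that do not change page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term",
                   "utm_content", "gclid", "fbclid"}


def normalize_url(url: str) -> str:
    """Map URL variations onto one preferred form under the assumed site policy."""
    parts = urlsplit(url)
    path = parts.path.lower() or "/"
    last_segment = path.rsplit("/", 1)[-1]
    if not path.endswith("/") and "." not in last_segment:
        path += "/"  # add a trailing slash unless the path looks like a file
    kept = [(key, value)
            for key, value in parse_qsl(parts.query, keep_blank_values=True)
            if key.lower() not in TRACKING_PARAMS]
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(kept), ""))


def group_duplicates(urls: list[str]) -> dict[str, list[str]]:
    """Group crawled URLs by normalized form, keeping only groups with duplicates."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize_url(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}
```

The same function can drive redirect rules, sitemap generation, and internal link checks so that every system agrees on the preferred URL.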

Redirects and Website Migrations

Redirects guide users and search engines from one URL to another. They are essential when pages are moved, merged, deleted, or renamed.

A 301 redirect is commonly used for permanent moves. A 302 is used for temporary changes. Redirects should point to the most relevant live destination, not simply to the homepage.

Website migrations are one of the highest-risk technical SEO projects because they often involve changes to URLs, templates, internal links, content, metadata, structured data, and navigation at the same time.

A careful migration should include:

- A full URL inventory

- Redirect mapping

- Benchmarking before launch

- Testing in staging

- Crawl checks before and after launch

- Sitemap updates

- Internal link updates

- Monitoring for indexation and traffic changes

Poor migration planning can cause significant ranking drops. Recovery is possible, but it is usually more expensive than preventing the problem in the first place.
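Two of the checks above, redirect mapping and the URL inventory, lend themselves to simple validation before launch. The sketch below flattens a redirect map so every old URL points straight at its final destination, and lists inventory URLs that are neither kept live nor redirected. All URLs and data structures here are hypothetical.

```python
def flatten_redirects(redirect_map: dict[str, str]) -> dict[str, str]:
    """Point every old URL directly at its final destination; raise on loops."""
    flattened = {}
    for source in redirect_map:
        target = redirect_map[source]
        visited = {source}
        while target in redirect_map:
            if target in visited:
                raise ValueError(f"redirect loop involving {target}")
            visited.add(target)
            target = redirect_map[target]
        flattened[source] = target
    return flattened


def unmapped_urls(inventory, redirect_map, live_urls):
    """Return old URLs that neither stay live nor have a redirect."""
    return sorted(set(inventory) - set(redirect_map) - set(live_urls))


# Hypothetical migration data for illustration.
redirect_map = {
    "/old-seo-guide/": "/seo-guide/",
    "/seo-guide/": "/technical-seo/",
    "/old-pricing/": "/pricing/",
}
inventory = ["/old-seo-guide/", "/old-pricing/", "/old-about/", "/pricing/"]
live_urls = {"/pricing/", "/technical-seo/"}

flat_map = flatten_redirects(redirect_map)
missing = unmapped_urls(inventory, redirect_map, live_urls)
```

In this sample, the chain through `/seo-guide/` is collapsed into a single hop, and `/old-about/` is reported as having no destination.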

International and Multilingual Technical SEO

Websites targeting multiple countries or languages need additional technical controls. The most common is hreflang, which helps search engines serve the correct language or regional version of a page.

Hreflang implementation must be precise. Each language version should reference the others correctly, use valid language and region codes, and point to indexable canonical URLs. Mistakes can cause the wrong version of a page to appear in search results.
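The requirement that each version references the others can be checked mechanically. The sketch below looks for missing return links in a set of hreflang annotations. The `annotations` structure (page URL mapped to its language-to-URL alternates) and the example URLs are assumptions; validating language and region codes or indexability would need additional checks.

```python
def missing_return_links(hreflang: dict[str, dict[str, str]]) -> list[tuple[str, str]]:
    """Return (page, alternate) pairs where `alternate` does not link back to `page`."""
    missing = []
    for page, alternates in hreflang.items():
        for alternate_url in alternates.values():
            if alternate_url == page:
                continue  # self-reference needs no return link
            back_links = hreflang.get(alternate_url, {}).values()
            if page not in back_links:
                missing.append((page, alternate_url))
    return missing


# Hypothetical annotations: page URL -> {language code: alternate URL}.
annotations = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
        "fr": "https://example.com/fr/",
    },
    "https://example.com/de/": {
        "de": "https://example.com/de/",
        "en": "https://example.com/en/",
    },
    "https://example.com/fr/": {
        "fr": "https://example.com/fr/",  # does not reference the English page
    },
}

broken_pairs = missing_return_links(annotations)
```

Here the English page points to the French page, but the French page does not point back, so that pair is reported.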

International SEO also requires decisions about URL structure. Common options include country-code domains, subdomains, or subdirectories. Each approach has trade-offs related to authority, management, localization, and analytics.

Technical SEO for international websites should work closely with content localization. A translated page is not always enough. Search behavior, terminology, currency, examples, and user expectations may differ by market.

Common Technical SEO Mistakes

Many technical SEO problems come from small decisions that accumulate over time. A website may not have one obvious issue, but dozens of minor problems can reduce search performance.

Blocking Important Pages

Important pages may be blocked through robots.txt, noindex tags, incorrect canonical tags, or authentication requirements. This often happens after development work, staging changes, or template updates.

The result is simple: pages that should rank may not be eligible to appear in search results.

Creating Too Many Low-Value URLs

Faceted navigation, internal search, filters, tags, and parameters can generate thousands of URL variations. If these pages are thin, duplicate, or not strategically useful, they can waste crawl resources and dilute quality signals.

This is especially common on ecommerce and publishing websites.

Ignoring Internal Links

Some websites rely heavily on navigation menus and forget contextual internal links within content. Contextual links help search engines understand relationships between pages and help users continue learning.

For example, a section about page speed should naturally point readers toward a deeper guide on website performance for SEO. A link like this helps the reader and does not feel forced.

Using Canonical Tags Incorrectly

Canonical tags are often added automatically by CMS platforms or plugins, but automatic does not always mean correct. A page may canonicalize to the wrong URL, point to a redirected page, or conflict with internal links and sitemap entries.

Canonical errors can remove pages from competition or consolidate signals in the wrong place.

Treating Technical SEO as a One-Time Fix

Technical SEO is not only a launch checklist. Websites change constantly. New pages are added, plugins are updated, products are removed, templates are redesigned, and tracking scripts are installed.

Without regular monitoring, technical issues return.

How to Apply Technical SEO Strategically

A good Technical SEO process starts with business priorities, not only tool exports. SEO crawlers, log files, Search Console data, analytics platforms, and performance tools can reveal issues, but not every issue has the same impact.

The right question is not “How many errors did the audit find?” The better question is “Which technical issues are limiting the pages that matter most?”

Start With Important Page Types

Review the pages that drive or should drive business value. These may include service pages, product categories, high-intent landing pages, location pages, major guides, and comparison pages.

For each page type, assess whether search engines can crawl, index, render, and understand the content. Then check whether users can access and use the page smoothly.

Separate Critical Issues From Minor Issues

Not all technical warnings are urgent. A missing image alt attribute on a decorative icon is not the same as a noindex tag on a revenue-driving page.

Prioritize issues based on impact, scale, and difficulty. A problem affecting one low-value page may not deserve immediate development resources. A template-level issue affecting thousands of indexable pages may be urgent.
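One simple way to apply this is a rough score that rewards impact and scale and discounts difficulty. The formula, the 1-to-5 scales, and the sample issues below are purely illustrative assumptions, not an established standard; the point is only to make the trade-off explicit and comparable.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    impact: int          # 1 (cosmetic) to 5 (blocks revenue pages); hypothetical scale
    pages_affected: int  # scale of the problem
    effort: int          # 1 (quick fix) to 5 (major development work); hypothetical scale


def priority_score(issue: Issue) -> float:
    """Illustrative score: higher impact and scale raise it, higher effort lowers it."""
    return issue.impact * issue.pages_affected / issue.effort


# Hypothetical audit findings.
issues = [
    Issue("missing alt text on decorative icon", impact=1, pages_affected=1, effort=1),
    Issue("noindex on category template", impact=5, pages_affected=3000, effort=2),
    Issue("redirect chain on old blog URLs", impact=2, pages_affected=120, effort=2),
]

ranked = sorted(issues, key=priority_score, reverse=True)
```

With these inputs, the template-level noindex ranks first and the decorative icon ranks last, which matches the reasoning above.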

Work With Developers Early

Technical SEO often requires implementation support. SEO recommendations should be specific, testable, and realistic. Developers need clear acceptance criteria, examples, and expected outcomes.

Instead of saying “fix crawlability,” define the exact issue: which URLs are affected, what the current behavior is, what the desired behavior is, and how success will be tested.

Monitor After Changes

Every technical change should be monitored after implementation. Check crawl data, server responses, Search Console reports, index coverage, page performance, rankings, and organic traffic patterns.

Some effects appear quickly, such as fixing a broken redirect. Others take longer because search engines need to recrawl and reassess the affected URLs.

Technical SEO Audit Checklist

A Technical SEO audit should review the systems that affect crawlability, indexability, performance, and search understanding.

Key areas to assess include:

- Crawl status and crawl depth

- Indexable versus non-indexable URLs

- Robots.txt rules

- XML sitemap quality

- Canonical tag consistency

- Redirects and redirect chains

- Broken links and 404 pages

- Duplicate content patterns

- Internal linking structure

- Page speed and Core Web Vitals

- Mobile usability

- Structured data validity

- Hreflang setup, if relevant

- Pagination and faceted navigation

- Log file patterns, if available

- HTTPS and security issues

- Metadata templates

- JavaScript rendering risks

The goal of an audit is not to produce a long list of problems. The goal is to identify the issues that matter, explain why they matter, and define a clear path to improvement.

How Long Technical SEO Takes to Show Results

Technical SEO results depend on the type of issue, the size of the website, and how quickly search engines recrawl affected pages.

Some fixes can show improvements quickly. For example, resolving a robots.txt block or removing an accidental noindex tag may allow important pages to return to the index once they are recrawled.

Other improvements take longer. Site architecture changes, internal linking improvements, performance optimization, and large-scale canonical cleanup may need repeated crawling and evaluation before results become visible.

For most websites, Technical SEO should be treated as an ongoing process rather than a single project. Initial audits and fixes create the foundation, but regular monitoring protects performance as the website grows.

Technical SEO and the Broader SEO Strategy

Technical SEO works best when it supports a broader SEO strategy. A technically clean website with weak content may still struggle. A content-rich website with technical problems may fail to reach its potential.

The strongest results usually come when technical improvements are aligned with:

- Keyword research

- Content planning

- On-page optimization

- Internal linking

- Digital PR and link earning

- Conversion optimization

- Analytics and reporting

For example, a business investing in keyword research should make sure the pages targeting those keywords are crawlable, indexable, internally linked, and fast. A company building content optimization workflows should also ensure its templates support proper headings, metadata, schema, and mobile usability.

Technical SEO provides the infrastructure. Content and authority build on top of it.

Conclusion

Technical SEO is the foundation of sustainable organic search performance. It helps search engines discover the right pages, understand their purpose, index them correctly, and deliver them to users in a reliable experience.

The most effective approach is strategic, not mechanical. Technical SEO should focus on the pages that matter, the issues that limit performance, and the systems that help a website grow without creating unnecessary search problems.

A strong technical foundation will not guarantee rankings on its own. But without it, even excellent content can be held back. For businesses serious about organic growth, Technical SEO is not optional maintenance. It is a core part of building a website that search engines and users can trust.